AI for Near Real-Time Crop Monitoring—A New Method

- Post by: Jean Bouchat

- November 27, 2025

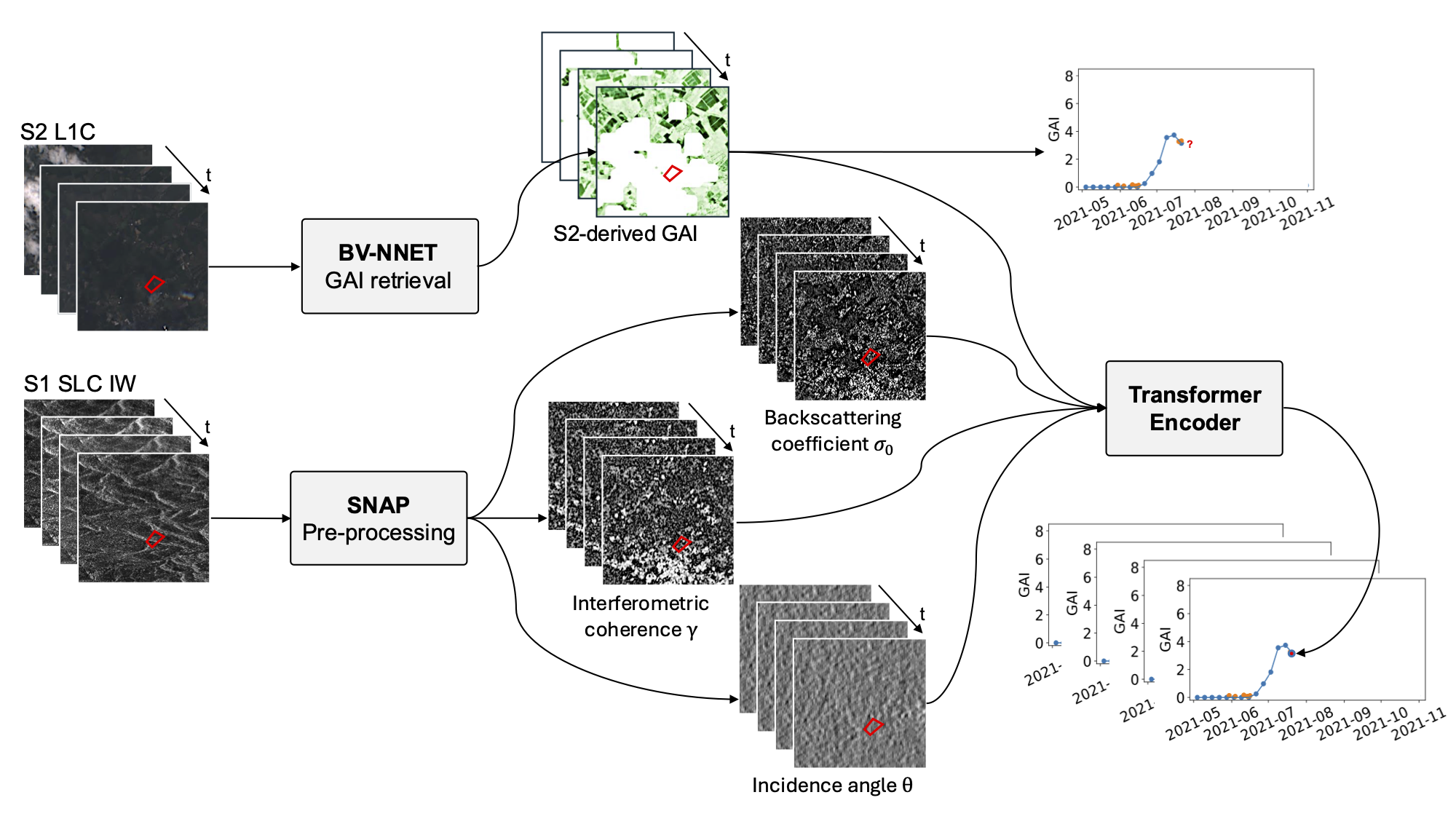

Researchers from the Earth System Science Hub and the Earth and Life Institute at UCLouvain, Belgium, have developed a new AI-based method that retrieves the Green Area Index (GAI) of maize fields in near real time, even when cloud cover prevents the use of optical images. The work, published in the IEEE Journal of Selected Topics in Applied Earth Observations and Remote Sensing, introduces a transformer-based approach that fuses Sentinel-1 SAR observations with Sentinel-2 optical data. It targets one of the most persistent limitations in crop monitoring: the unreliable availability of cloud-free optical images during key stages of the growing season.

The GAI is a biophysical variable of crucial importance for assessing crop development, modeling water and carbon fluxes, and improving yield estimation. Although GAI is typically retrieved from optical satellite imagery, such data are frequently unavailable due to cloud cover, often precisely during the periods when vegetation is actively growing.

The new approach addresses this problem by exploiting the ability of synthetic aperture radar (SAR) to penetrate clouds and observe the canopy regardless of weather conditions.

Study Area

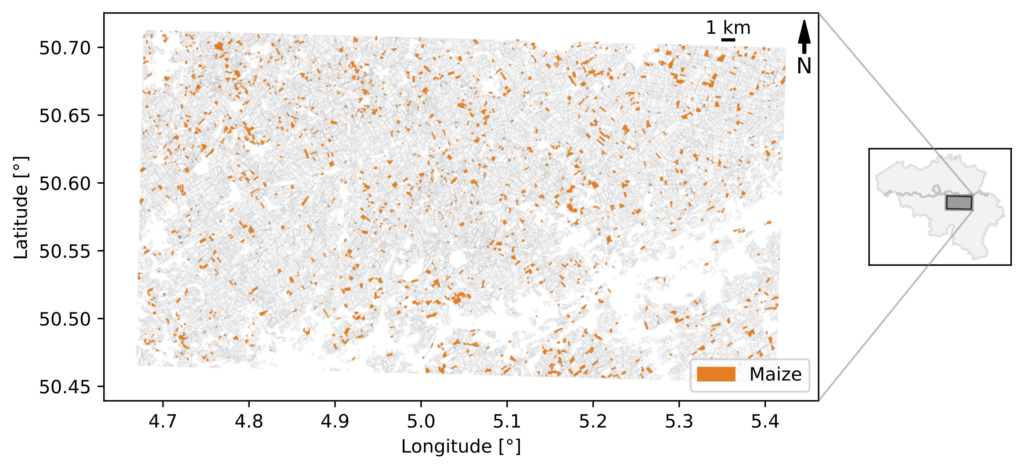

The method was tested over the Hesbaye region of Belgium, a 53 km × 27.5 km agricultural area with relatively uniform soils and large, homogeneous fields. Four years of Sentinel-1 and Sentinel-2 observations (2018–2021) were used for training and validation.

Method Overview

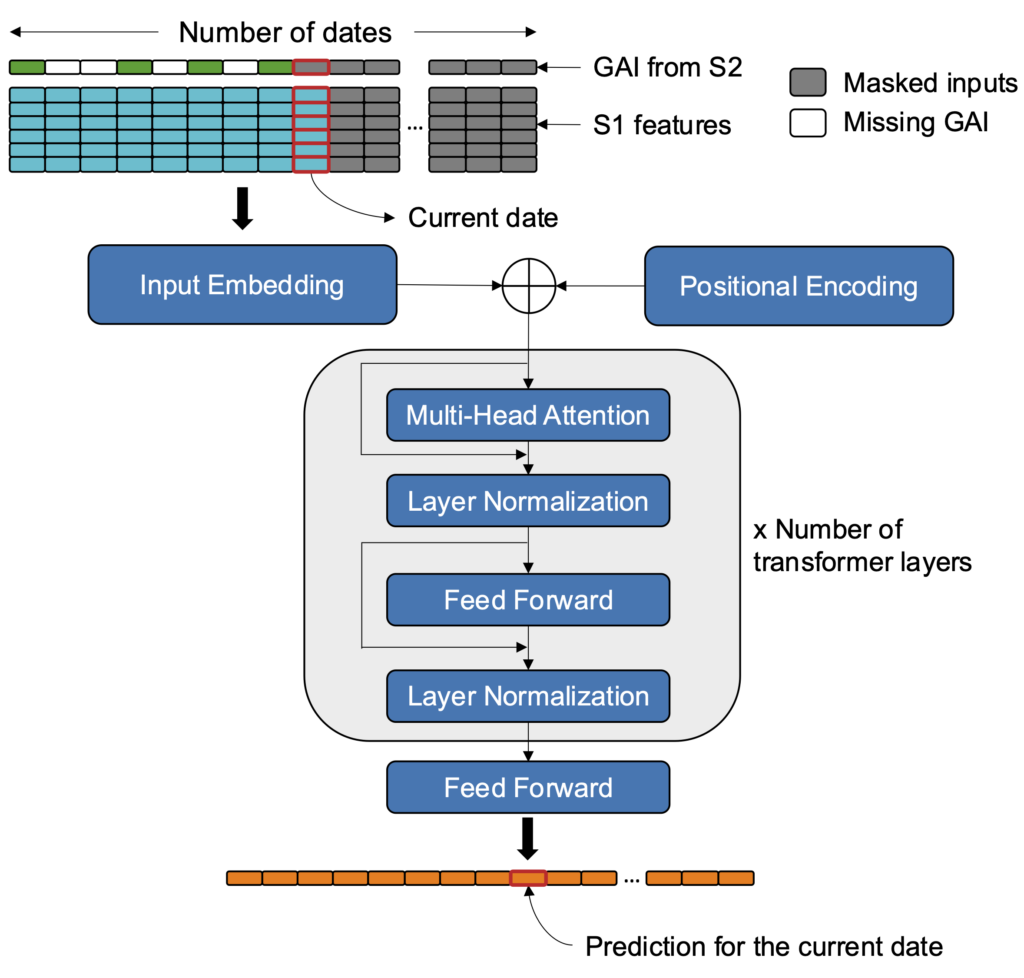

Instead of reconstructing optical reflectance from SAR—which can introduce significant modeling errors—the method directly predicts the missing GAI value at the time when an optical observation is unavailable. The model uses:

- past and present Sentinel-1 SAR features (backscatter, interferometric coherence, and radar vegetation indices),

- past Sentinel-2-derived GAI values when available,

- and the temporal dynamics of crop development learned over multiple years.
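To make the inputs listed above more concrete, here is a minimal sketch of how one parcel's time series might be turned into per-date feature vectors. Everything in it is an assumption for illustration: the `Observation` fields, the token layout, and the use of the common dual-polarization radar vegetation index `4·VH / (VV + VH)` are not taken from the paper, which does not specify its indices or encoding here. Missing Sentinel-2 GAI values are replaced by 0 and flagged with a mask feature so a model could tell "no observation" apart from "GAI equals zero".

```python
from dataclasses import dataclass
from typing import List, Optional


def db_to_linear(value_db: float) -> float:
    """Convert backscatter from decibels to linear power units."""
    return 10.0 ** (value_db / 10.0)


def dual_pol_rvi(vv_db: float, vh_db: float) -> float:
    """A common dual-pol radar vegetation index (assumed, not the paper's)."""
    vv = db_to_linear(vv_db)
    vh = db_to_linear(vh_db)
    return 4.0 * vh / (vv + vh)


@dataclass
class Observation:
    """One acquisition date for one parcel (hypothetical layout)."""
    day_of_year: int
    vv_db: float            # Sentinel-1 VV backscatter, dB
    vh_db: float            # Sentinel-1 VH backscatter, dB
    coherence: float        # interferometric coherence, 0..1
    gai: Optional[float]    # Sentinel-2-derived GAI, None if clouded


def build_tokens(series: List[Observation]) -> List[List[float]]:
    """Turn a parcel time series into one feature vector per date."""
    tokens = []
    for obs in series:
        has_gai = obs.gai is not None
        tokens.append([
            obs.day_of_year / 365.0,              # rough position in the season
            obs.vv_db,
            obs.vh_db,
            obs.coherence,
            dual_pol_rvi(obs.vv_db, obs.vh_db),
            obs.gai if has_gai else 0.0,          # placeholder when missing
            1.0 if has_gai else 0.0,              # mask: 1 = GAI observed
        ])
    return tokens


# Illustrative values only, not real measurements.
series = [
    Observation(150, -10.0, -10.0, 0.4, 1.2),
    Observation(162, -9.0, -15.0, 0.3, None),     # cloudy date: no GAI
]
tokens = build_tokens(series)
```

The explicit mask feature is one simple way to let SAR-only dates and SAR-plus-optical dates share the same sequence format.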

A transformer encoder was selected due to its ability to capture sequential patterns, long-range temporal dependencies, and nonlinear relationships between GAI and the SAR features.
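The mechanism that gives a transformer encoder its access to long-range temporal dependencies is self-attention, in which every date in the sequence is compared with every other date. The sketch below shows single-head scaled dot-product attention in plain Python. It is a deliberately reduced illustration, not the published architecture: it assumes identity query, key, and value projections and omits positional encoding, multiple heads, and the feed-forward layers a real encoder would have.

```python
import math
from typing import List, Tuple

Vector = List[float]


def softmax(scores: Vector) -> Vector:
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens: List[Vector]) -> Tuple[List[Vector], List[Vector]]:
    """Single-head scaled dot-product self-attention.

    Queries, keys, and values are the tokens themselves (identity
    projections), which is a simplification for illustration.
    Returns the attended outputs and the attention weight matrix.
    """
    dim = len(tokens[0])
    scale = math.sqrt(dim)
    outputs, weights = [], []
    for query in tokens:
        # Similarity of this time step to every time step in the sequence.
        scores = [sum(q * k for q, k in zip(query, key)) / scale
                  for key in tokens]
        row = softmax(scores)
        weights.append(row)
        # Weighted average of all time steps, near or distant.
        outputs.append([
            sum(row[j] * tokens[j][i] for j in range(len(tokens)))
            for i in range(dim)
        ])
    return outputs, weights


# Three toy time steps with two features each (illustrative values).
outputs, weights = self_attention([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
```

Each row of `weights` sums to 1 and can be read as how much one date draws on every other date, regardless of how far apart they are in the season.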

Performance and Validation

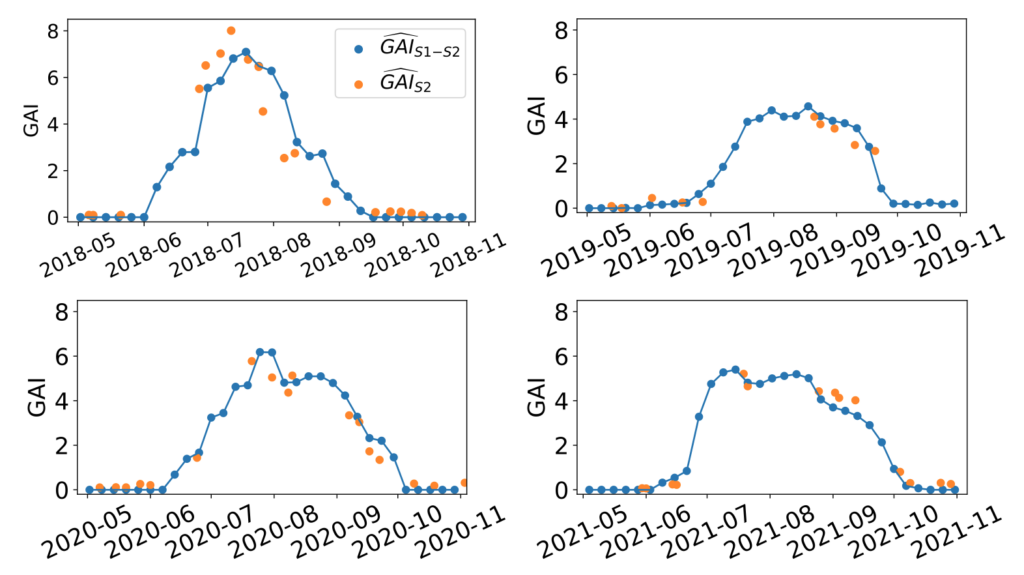

A nested cross-validation strategy was used to ensure that the model generalizes across years rather than overfitting to a single growing season. When validated on an unseen year, the model achieved an R² of 0.88 and an RMSE of 0.71 (in GAI units).
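One way to picture a year-wise nested cross-validation over the four seasons in the dataset is sketched below. The exact fold layout used in the study is not described here, so this scheme is an assumption: the outer loop holds out one year for testing, and the inner loop holds out one of the remaining years for model selection. The function name `nested_year_splits` is hypothetical.

```python
from typing import Iterator, List, Tuple

YEARS = [2018, 2019, 2020, 2021]


def nested_year_splits(
    years: List[int],
) -> Iterator[Tuple[List[int], int, int]]:
    """Yield (train_years, validation_year, test_year) combinations.

    Outer loop: the test year is never seen during training or tuning.
    Inner loop: one remaining year is held out to select hyperparameters.
    """
    for test_year in years:
        remaining = [y for y in years if y != test_year]
        for val_year in remaining:
            train_years = [y for y in remaining if y != val_year]
            yield train_years, val_year, test_year


for train_years, val_year, test_year in nested_year_splits(YEARS):
    print(f"train={train_years} validate={val_year} test={test_year}")
```

Splitting by whole years, rather than by random samples, keeps observations from the same growing season out of both the training and test sets at once.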

These results indicate that the method can reliably retrieve GAI at the parcel level under operational conditions.
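For readers less familiar with the two scores quoted for the unseen year, the following sketch shows how they are typically computed from paired reference and predicted GAI values. It assumes R² means the coefficient of determination (one minus the ratio of residual to total sum of squares); the article does not say whether that definition or the squared correlation was used. The sample values are invented for illustration.

```python
import math
from typing import Sequence


def rmse(reference: Sequence[float], predicted: Sequence[float]) -> float:
    """Root mean square error, in the same units as the inputs."""
    squared = [(r - p) ** 2 for r, p in zip(reference, predicted)]
    return math.sqrt(sum(squared) / len(squared))


def r_squared(reference: Sequence[float], predicted: Sequence[float]) -> float:
    """Coefficient of determination: 1 - SS_residual / SS_total."""
    mean_ref = sum(reference) / len(reference)
    ss_res = sum((r - p) ** 2 for r, p in zip(reference, predicted))
    ss_tot = sum((r - mean_ref) ** 2 for r in reference)
    if ss_tot == 0.0:
        raise ValueError("reference values have zero variance")
    return 1.0 - ss_res / ss_tot


# Invented example values, not data from the study.
reference_gai = [1.0, 2.0, 3.0, 4.0]
predicted_gai = [1.1, 1.9, 3.2, 3.8]
print(f"R2 = {r_squared(reference_gai, predicted_gai):.2f}")
print(f"RMSE = {rmse(reference_gai, predicted_gai):.2f}")
```

Because RMSE carries the units of the target variable, a value of 0.71 is read directly as a typical error in GAI.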

An analysis of feature importance confirmed that past Sentinel-1 information provides substantial predictive power, especially during long cloud-induced gaps in optical data. The model also responded correctly to simulated unexpected events, such as early harvests, indicating that it does not merely extrapolate past optical behavior but actively interprets the radar signal.

Implications for Crop Monitoring

The results highlight the potential of transformer-based fusion to deliver consistent, all-weather estimates of crop canopy development. By filling the gaps left by cloud-covered optical images, the approach directly benefits:

- continuous monitoring of crop status at field scale, and

- early warning systems in regions where agricultural decisions depend on timely information.

The recently launched NISAR mission and the future ROSE-L mission, both carrying L-band SAR, are expected to further expand these capabilities, enabling more accurate retrievals in high-biomass crops and laying the foundation for next-generation, all-weather vegetation monitoring systems.

Link to the article: https://doi.org/10.1109/JSTARS.2025.3622750